Conversational AI with Language Models: From Architecture to Enterprise

1. What Is an LLM and How Does It Power Conversational AI?

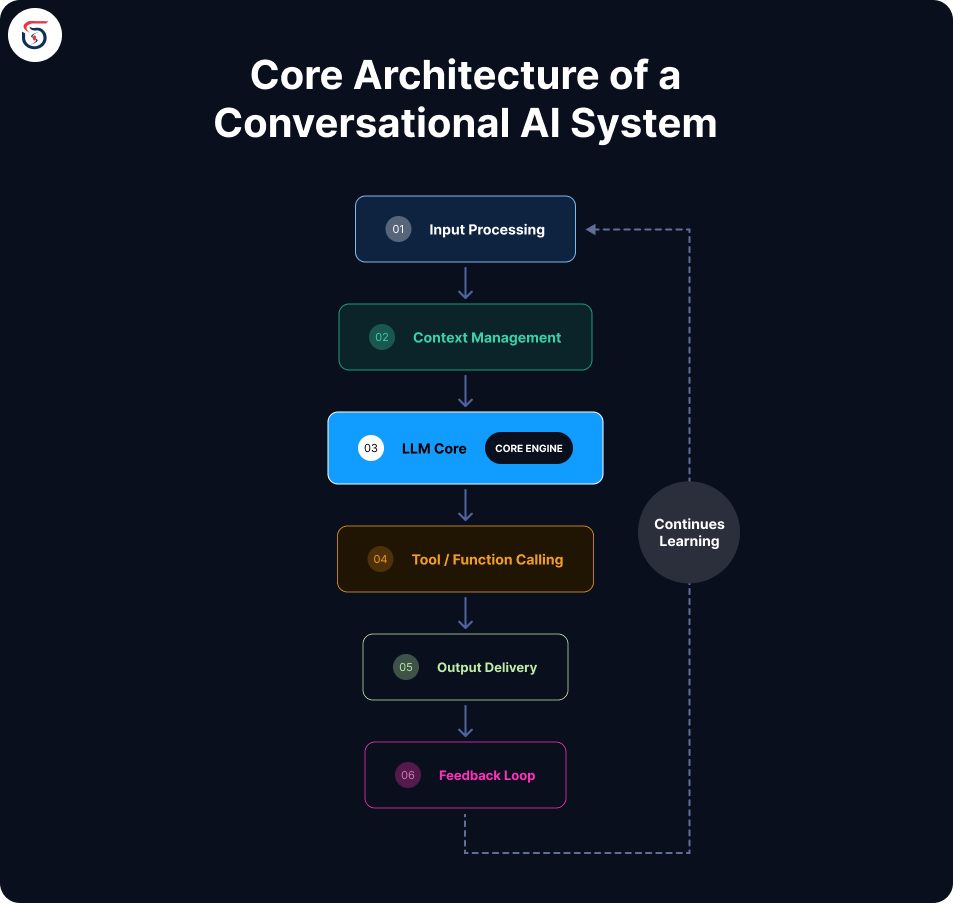

2. Core Architecture of a Conversational AI System

3. Top Companies Offering Conversational AI Solutions Powered by LLMs

4. How to Integrate Conversational AI with Popular Language Model APIs

5. How Advanced Language Models Enhance User Experience in Chatbots

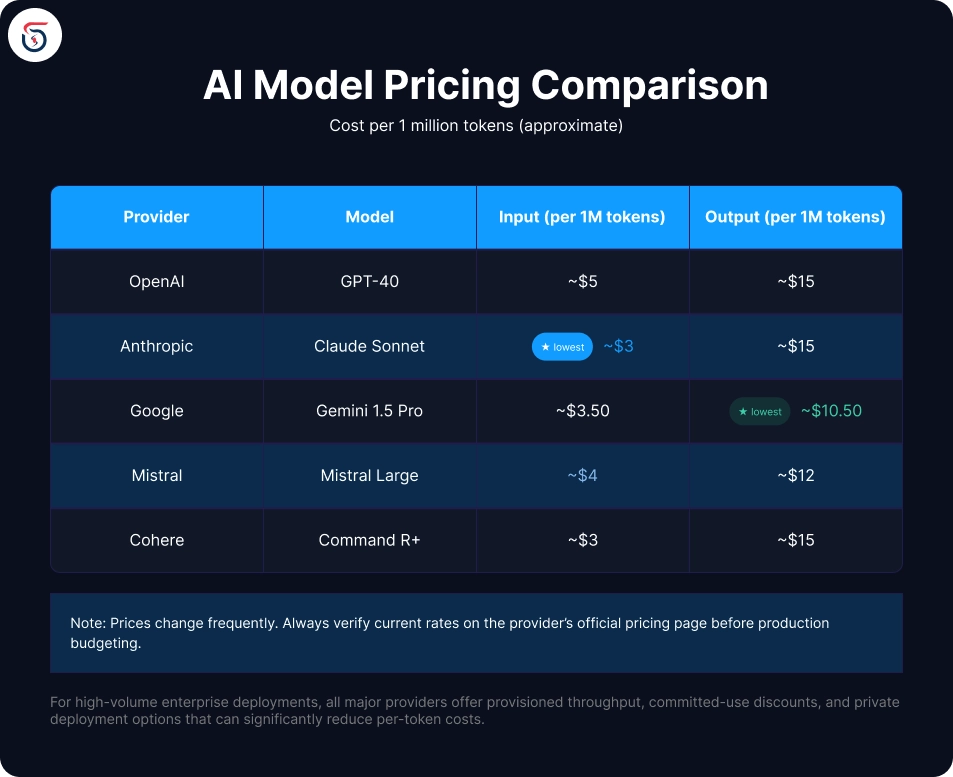

6. Pricing Comparison for Conversational AI Services

7. Which Conversational AI Products Support Multiple Languages?

8. Comparing Conversational AI Platforms for Enterprise Use

What Is an LLM and How Does It Power Conversational AI?

Core Architecture of a Conversational AI System

Top Companies Offering Conversational AI Solutions Powered by LLMs

Anthropic (Claude): Claude models, including Claude Sonnet and Claude Opus, are widely praised for their nuanced reasoning, long-context handling (up to 200K tokens), and strong safety alignment. Claude excels in enterprise document analysis, coding assistance, and complex multi-step reasoning tasks.

OpenAI (ChatGPT / GPT-4o): OpenAI remains the category-defining name. GPT-4o is a multimodal model capable of processing text, images, and audio. The ChatGPT product has the broadest consumer reach, while the API powers thousands of third-party applications.

Google DeepMind (Gemini): Gemini 1.5 Pro offers one of the longest commercially available context windows (1 million tokens), which makes it well suited to document-heavy enterprise use cases. Google's tight integration with Workspace products gives it a strong enterprise distribution advantage.

Meta (Llama): Meta's Llama 3 family is the most prominent open-weight LLM line, available for commercial use under Meta's community license. It powers a growing ecosystem of self-hosted and fine-tuned conversational applications and is especially popular among teams with data privacy constraints.

Mistral AI: A French AI startup offering compact, highly capable open-weight models. Mistral's models are known for strong multilingual performance and efficiency, making them attractive for European enterprises navigating GDPR requirements.

Cohere: Focused squarely on the enterprise, Cohere offers models optimized for retrieval-augmented generation (RAG), classification, and semantic search, which are core capabilities for business-facing conversational AI.

How to Integrate Conversational AI with Popular Language Model APIs

How Advanced Language Models Enhance User Experience in Chatbots

Pricing Comparison for Conversational AI Services

Which Conversational AI Products Support Multiple Languages?

GPT-4o and Claude 3 both demonstrate strong performance across over 50 languages, with particularly strong results in European languages, Chinese, Japanese, Arabic, and Hindi. Claude has been noted for careful handling of right-to-left scripts.

Gemini 1.5 Pro is designed with multilingual use as a first-class priority, reflecting Google's global translation expertise. It supports over 100 languages.

Mistral models have notably strong French, German, Spanish, and Italian performance, a deliberate design choice given the company's European roots and customer base.

The Llama 3 family includes releases with expanded multilingual support (e.g., Llama 3.1), which improve non-English performance for self-hosted deployments.

Comparing Conversational AI Platforms for Enterprise Use

Want to build a powerful conversational AI solution for your business? Contact the SoftSages team to explore how we can help you design, develop, and scale AI-driven applications.

FAQs about Conversational AI with Large Language Models

A traditional chatbot follows pre-written scripts and decision trees - it can only respond to inputs it was explicitly programmed to handle. An LLM-powered conversational AI understands natural language, maintains context across multiple turns, handles unexpected phrasing, and generates responses dynamically. The difference is roughly comparable to a vending machine versus a knowledgeable human assistant.
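The contrast can be illustrated with a minimal sketch. Everything here is hypothetical: `SCRIPTED_REPLIES`, the `Conversation` class, and `echo_model` (a stand-in for a real model call) are illustrative names, not any vendor's API. The scripted bot answers only exact matches, while the conversation object resends the full message history on every turn, which is how context is typically carried across turns.

```python
# Hypothetical scripted replies: the bot knows only these exact inputs.
SCRIPTED_REPLIES = {
    "what are your hours": "We are open 9am to 5pm, Monday to Friday.",
    "where are you located": "We are at 12 Example Street.",
}


def scripted_bot(user_input):
    """Decision-table bot: answers only inputs it was explicitly programmed for."""
    key = user_input.lower().strip(" ?!.")
    return SCRIPTED_REPLIES.get(key, "Sorry, I did not understand that.")


class Conversation:
    """Keeps every turn so the model sees earlier context on each call."""

    def __init__(self, system_prompt, generate):
        self.messages = [{"role": "system", "content": system_prompt}]
        # Assumption: `generate` is any callable mapping a message list to reply text.
        self.generate = generate

    def send(self, user_input):
        self.messages.append({"role": "user", "content": user_input})
        reply = self.generate(self.messages)
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages):
    """Stand-in for a real LLM call; reports how many user turns it can see."""
    user_turns = [m for m in messages if m["role"] == "user"]
    return f"(model saw {len(user_turns)} user turn(s))"


print(scripted_bot("What are your hours?"))
print(scripted_bot("When do you open?"))  # unexpected phrasing falls through

chat = Conversation("You are a helpful support assistant.", echo_model)
chat.send("I need help with my order.")
print(chat.send("It has not arrived yet."))
```

In a real system, `echo_model` would be replaced by a call to the chosen provider's chat endpoint; the history-keeping pattern stays the same.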

In most cases, fine-tuning is not required. Prompt engineering and retrieval-augmented generation (RAG), where relevant documents are injected into the model's context at runtime, deliver strong results for the majority of use cases without the cost and complexity of fine-tuning. Fine-tuning is most valuable when you need the model to adopt a very specific style, domain vocabulary, or structured output format consistently at scale.

Costs depend on usage volume, the model tier selected, and average conversation length. For a low-traffic prototype, monthly API costs can be under \$50. For a high-traffic production application handling millions of conversations, costs can run into thousands of dollars per month. Most providers offer tiered pricing and volume discounts - always prototype with a smaller model first to validate before scaling.
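As a rough illustration of how those three factors combine, the sketch below estimates monthly spend from conversation volume, turns per conversation, and tokens per turn. The per-million-token prices and the traffic figures are hypothetical placeholders, not any provider's actual rates; substitute the numbers from your provider's price list.

```python
from dataclasses import dataclass


@dataclass
class UsageProfile:
    conversations_per_month: int
    turns_per_conversation: int
    input_tokens_per_turn: int   # prompt plus history sent on each turn
    output_tokens_per_turn: int  # tokens generated per reply


def monthly_cost_usd(profile, input_price_per_million, output_price_per_million):
    """Estimate monthly API spend from token volume and per-million-token prices."""
    turns = profile.conversations_per_month * profile.turns_per_conversation
    input_tokens = turns * profile.input_tokens_per_turn
    output_tokens = turns * profile.output_tokens_per_turn
    total = (input_tokens * input_price_per_million
             + output_tokens * output_price_per_million)
    return total / 1_000_000


# Hypothetical prices in USD per million tokens (assumptions, not real rates).
SMALL_MODEL = {"input_price_per_million": 0.50, "output_price_per_million": 1.50}
LARGE_MODEL = {"input_price_per_million": 5.00, "output_price_per_million": 15.00}

# Hypothetical traffic profiles.
prototype = UsageProfile(2_000, 6, 800, 200)
production = UsageProfile(1_000_000, 6, 800, 200)

for name, profile in [("prototype", prototype), ("production", production)]:
    for tier, prices in [("small", SMALL_MODEL), ("large", LARGE_MODEL)]:
        cost = monthly_cost_usd(profile, **prices)
        print(f"{name:10s} {tier:5s} ${cost:,.2f}")
```

Under these assumed numbers the prototype on the small tier costs about $8.40 per month, while the production profile costs about $4,200 on the small tier and $42,000 on the large one, which is why validating on a smaller model first is worthwhile.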

OpenAI's API is widely recommended for beginners due to its extensive documentation, large community, broad framework support (LangChain, LlamaIndex, etc.), and the availability of the Playground for testing prompts without writing code. Anthropic's Claude API is also beginner-friendly with clear documentation and generous context windows.

Yes, conversational AI can be deployed while keeping data under your own control. Open-weight models like Meta's Llama 3 and Mistral can be self-hosted on your own infrastructure, giving you full data sovereignty. Among closed providers, Azure OpenAI Service and Google Cloud Vertex AI allow deployment within your own cloud environment under your data governance policies, a common choice for healthcare, finance, and government sectors.

Most leading LLMs are trained on multilingual datasets and can understand and respond in dozens of languages without any configuration. For production multilingual deployments, it is best practice to test your specific target languages with real domain-specific queries, as performance can vary significantly by language and subject matter even within the same model.

RAG is a technique where the conversational AI retrieves relevant information from an external knowledge base (a vector database, document store, or search index) and includes it in the model's context before generating a response. This allows the AI to answer questions about your proprietary data - internal documentation, product catalogs, customer records - without retraining the model. RAG is currently the most popular architectural pattern for enterprise conversational AI.
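The retrieve-then-prompt flow can be sketched in a few lines. This is a toy version under stated assumptions: the `DOCUMENTS` list is a hypothetical knowledge base, and retrieval uses simple word-count cosine similarity in place of the embedding model and vector database a production system would normally use. The final prompt would then be sent to whichever model API you have chosen.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; in practice a vector database or search index.
DOCUMENTS = [
    "Refunds are issued within 14 days of a return request.",
    "Premium plans include priority support and a dedicated account manager.",
    "Passwords can be reset from the account settings page.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, top_k=2):
    """Return up to top_k documents that share vocabulary with the query."""
    query_vec = Counter(tokenize(query))
    scored = [(cosine_similarity(query_vec, Counter(tokenize(d))), d)
              for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_prompt(query, documents):
    """Inject the retrieved passages into the model's context before the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


print(build_prompt("How do I reset my password?", DOCUMENTS))
```

Because the knowledge lives in `DOCUMENTS` rather than in the model's weights, updating an answer means editing a document, not retraining.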

Evaluation should cover multiple dimensions: response accuracy (are answers factually correct?), relevance (does the response address the actual question?), tone consistency, latency, safety (does it avoid harmful outputs?), and task completion rate. A combination of automated evaluation (using an LLM-as-judge approach) and human review panels is considered best practice for production systems.
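One way to track these dimensions together is to record a verdict per test conversation and aggregate them. The sketch below assumes the per-record judgments (from an LLM judge or human reviewers) have already been collected; `EvalRecord`, `summarize`, and the sample values are hypothetical, and tone consistency is omitted for brevity.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class EvalRecord:
    accurate: bool        # judged factually correct
    relevant: bool        # addresses the question actually asked
    safe: bool            # no harmful output
    task_completed: bool  # user goal achieved
    latency_ms: float     # time to produce the response


def summarize(records):
    """Aggregate per-conversation verdicts into rates and latency statistics."""
    n = len(records)
    latencies = [r.latency_ms for r in records]
    return {
        "accuracy": sum(r.accurate for r in records) / n,
        "relevance": sum(r.relevant for r in records) / n,
        "safety": sum(r.safe for r in records) / n,
        "task_completion_rate": sum(r.task_completed for r in records) / n,
        "mean_latency_ms": mean(latencies),
        "max_latency_ms": max(latencies),
    }


# Hypothetical verdicts for four test conversations.
records = [
    EvalRecord(True, True, True, True, 420.0),
    EvalRecord(True, False, True, False, 610.0),
    EvalRecord(False, True, True, False, 380.0),
    EvalRecord(True, True, True, True, 590.0),
]

for metric, value in summarize(records).items():
    print(f"{metric}: {value}")
```

Tracking the same summary across releases makes regressions visible, for example a prompt change that raises accuracy but lowers task completion.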